— Jean-Marie Hullot · NeXT · 1988 — Tracing a conceptual lineage

I. A quiet revolution

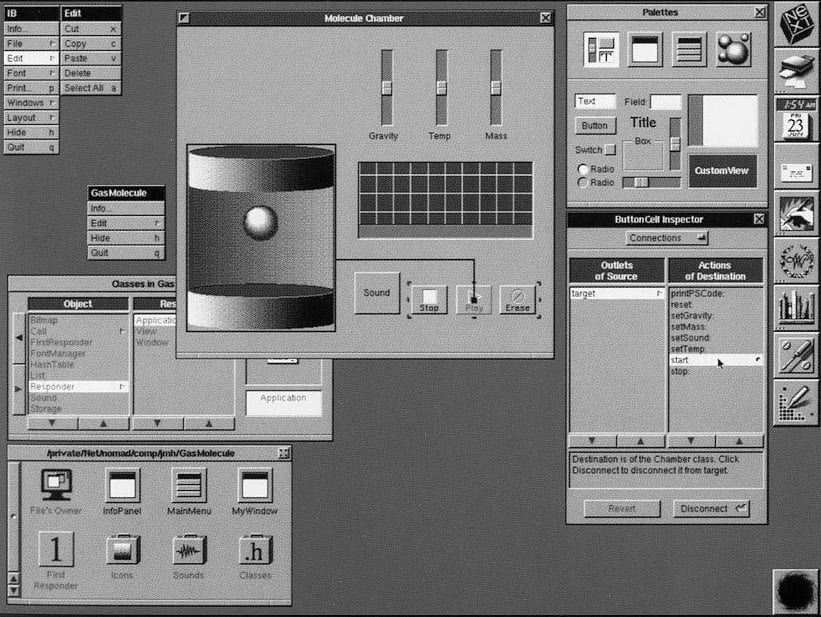

In 1988, Jean-Marie Hullot’s Interface Builder marked a genuine conceptual break, one whose significance has not yet been fully acknowledged. On Steve Jobs’s NeXT machines, a developer could for the first time see an interface take shape, connect buttons to code by drawing simple wires, and observe how the application behaved, all without recompiling. Programming ceased to be a solitary affair of text and became a visual, immediate, embodied dialogue.

The stakes went well beyond convenience. Interface Builder carried two ideas of deep intellectual coherence: on one hand, the mutualisation of code as a mode of collective construction; on the other, interactivity as a mode of thinking through development. Nearly forty years later, these two ideas find their logical continuation in large language models applied to programming.

A good tool does not interpose itself between intention and its realisation. It makes them coincide.

— Design principle, NeXT Software, 1990s

II. First paradigm: the mutualisation of code

The idea did not originate at NeXT. It was born at Xerox PARC in the 1970s, with Smalltalk — the first language to push the principle of object-oriented inheritance to its logical conclusion. Alan Kay and his colleagues had grasped something fundamental: if code can be organised into objects that build on their predecessors without rewriting them, then programming effort can be mutualised — written once, reused everywhere, enriched collectively.

Smalltalk went further still: its environment was not a program one compiled and relaunched, but a living image — a space of objects in permanent operation. Changing the code of a method in the class browser was enough for all objects currently running to adopt the new behaviour immediately, without interrupting the machine. Because message dispatch between objects was dynamic, modified code took effect at the next message send. One could literally repair a program while it was running. This principle — code as a living, immediately reactive material — is the founding intuition of all the interactivity that followed.
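The mechanism can be sketched outside Smalltalk. The following Python fragment is an analogy, not Smalltalk code: Python also resolves methods at call time, so rebinding a method on a class changes the behaviour of instances that already exist. The class `Oscillator` and the function `describe_v2` are hypothetical names chosen for illustration.

```python
class Oscillator:
    """A long-lived object, standing in for an object in a running image."""

    def __init__(self, amplitude):
        self.amplitude = amplitude

    def describe(self):
        return f"amplitude={self.amplitude}"


# The object is created once and stays alive; it is never rebuilt.
live_object = Oscillator(2.0)
before = live_object.describe()


def describe_v2(self):
    # New behaviour, written while the object above is still alive.
    return f"amplitude={self.amplitude}, energy={self.amplitude ** 2}"


# Rebinding the method on the class plays the role of saving an edit
# in the class browser.
Oscillator.describe = describe_v2

# Method lookup happens at call time, so the existing instance
# picks up the new code at its next call.
after = live_object.describe()

print(before)
print(after)
```

The instance is never recreated: only the lookup result changes, which is what makes repair during execution possible in this style of system.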

But Smalltalk remained the domain of researchers and specialists. It was NeXT that professionalised this paradigm by crystallising it into its APIs, Foundation and AppKit: stable, documented frameworks ready for industrial production. Developers no longer had to write the foundations of their applications themselves; they inherited a shared infrastructure and could focus their energy on what actually distinguished their work.

Interface Builder took a further, decisive step: it democratised this mutualisation down to the non-programmer. By placing a button object (today's NSButton) on a canvas, even someone who could not read Objective-C was instantiating thousands of lines of code written, tested, and documented by others. They were not creating a button; they were invoking the button that an entire community had built before them, without needing to understand its internal mechanics.

This gesture — calling rather than writing, combining and arranging rather than building from scratch — reveals a simple economic truth: what has been mutualised no longer needs to be reinvented. What Smalltalk had theorised, what NeXT had industrialised, Interface Builder made accessible.

The genealogy

Smalltalk — invents mutualisation through inheritance and living code (image, dynamic dispatch). Reserved for researchers.

NeXT / AppKit — professionalises mutualisation. Accessible to expert developers.

Interface Builder — gives living code its visual dimension. Democratises access to non-programmers.

The invariant principle

At each stage, the circle of those who can benefit from mutualised code widens — without those entering that circle needing to understand what happens underneath. The power is preserved; the barrier to entry falls.

III. Second paradigm: interactivity as a mode of thinking

The second contribution of Interface Builder is perhaps even more radical; more precisely, it is where the tool is most original. The idea of immediately reactive code did not originate with it: Smalltalk had already inscribed it at the heart of its architecture, through its living image and dynamic message resolution. What Smalltalk had invented for the expert developer, Interface Builder made visible and accessible.

Before Interface Builder, programming meant write, compile, run, observe — a linear, deferred cycle in which thought and result were separated by compilation time. Interface Builder broke this cycle in a new way: not merely through runtime dynamics, but through the visual gesture.

Thanks to the dynamic runtime of Objective-C — inherited directly from Smalltalk — and the IBAction/IBOutlet mechanism, a developer could connect a button to a method by drawing a line with the mouse. The effect was immediate, visible, reversible. Code and its graphical incarnation coexisted simultaneously, within the same mental space. One no longer described an interface: one shaped it.
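The idea behind such a connection can be sketched in a few lines. This is a simplified model of the target-action pattern, written in Python rather than Objective-C, and not the AppKit implementation: the classes `Button` and `Controller` and their methods are hypothetical. The point it illustrates is that a wire is only data (a target object and a method name), resolved dynamically when the click occurs.

```python
class Button:
    """Hypothetical stand-in for a UI control with a target-action connection."""

    def __init__(self, title):
        self.title = title
        self.target = None   # object that receives the message
        self.action = None   # name of the method to call, stored as a string

    def connect(self, target, action):
        # Drawing a wire in the builder amounts to recording these two values.
        self.target = target
        self.action = action

    def click(self):
        # The method is looked up by name at click time (dynamic dispatch).
        if self.target is None or self.action is None:
            return None
        handler = getattr(self.target, self.action)
        return handler(self)


class Controller:
    def __init__(self):
        self.log = []

    def start_simulation(self, sender):
        self.log.append(f"start requested by '{sender.title}'")

    def reset_simulation(self, sender):
        self.log.append(f"reset requested by '{sender.title}'")


controller = Controller()
button = Button("Run")

button.connect(controller, "start_simulation")
button.click()

# Rewiring is reversible and needs no change to either class.
button.connect(controller, "reset_simulation")
button.click()

print(controller.log)
```

Because the connection is stored rather than compiled in, it can be changed or undone at any moment, which is the property the visual wiring gesture relies on.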

This idea — that one understands better by manipulating than by reading, that one thinks better by interacting than by describing — is not specific to software. It is a mode of thinking whose roots trace back to Dewey, to Papert, and which cognitive scientists today call embodied cognition.

If there is any delay in that feedback loop, between thinking of something and seeing it, and building on it, then there is a whole world of ideas which will just never be. These are thoughts that we can’t think.

— Bret Victor, Inventing on Principle (2012)

IV. Personal perspective — Thirty years building interfaces for thinking

I discovered NeXT in 1988 and began using Interface Builder in 1989, at first simply as a tool that would free me from the repetitive constraints of building graphical interfaces. It was as a scientific computing engineer working alongside physicists Pierre Coullet and later Jean-René Chazottes that I came to understand its full significance. We were looking together for an answer to a question that was not, at its core, about computing: how can equations and mathematics be made vivid, accessible, intuitive? How, at least initially, can one set the formalism aside and give the mathematics immediacy?

My answer, developed over three decades at CNRS, has always been the same: through interactivity. Not interactivity as a pedagogical convenience, but as a mode of knowledge production.

Adjusting a parameter, changing an initial condition, shifting from one representation to another: each of these gestures brings a different facet of the same model into view. This is not a demonstration, but it is not mere illustration either. It is an exploratory space where intuition forms through direct contact with the phenomenon. One understands a Hopf bifurcation differently when one watches it prepare itself, then unfold under one’s hands: the equilibrium loses its stability, an oscillation emerges, the system changes regime. The theorem establishes the conditions for this transition; the interactive tool makes its internal dynamics perceptible.
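The transition described here can be made concrete with the standard textbook normal form of a supercritical Hopf bifurcation. The sketch below is not taken from the simulations mentioned in this essay; the function name `final_amplitude`, the explicit Euler scheme, the step size, and the parameter values are assumptions chosen for illustration. For a negative control parameter `mu` the trajectory spirals into the equilibrium; for a positive one it settles on an oscillation whose amplitude approaches the square root of `mu`.

```python
import math


def final_amplitude(mu, omega=1.0, x0=0.5, y0=0.0, dt=0.001, t_end=100.0):
    """Integrate the Hopf normal form and return the distance to the origin.

    dx/dt = mu*x - omega*y - (x^2 + y^2)*x
    dy/dt = omega*x + mu*y - (x^2 + y^2)*y

    A plain explicit Euler step is used for brevity; it is accurate enough
    here only because dt is small.
    """
    x, y = x0, y0
    for _ in range(int(round(t_end / dt))):
        r2 = x * x + y * y
        dx = mu * x - omega * y - r2 * x
        dy = omega * x + mu * y - r2 * y
        x, y = x + dt * dx, y + dt * dy
    return math.hypot(x, y)


# Same initial condition, two values of the control parameter.
for mu in (-0.2, 0.2):
    amplitude = final_amplitude(mu)
    print(f"mu = {mu:+.1f}  long-time amplitude = {amplitude:.4f}")
```

Changing `mu` in small steps around zero and rerunning is the textual counterpart of moving a slider: the equilibrium is stable on one side, and an oscillation of growing amplitude appears on the other.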

Interactive visual simulations as instruments of thought

Everything I have developed, from the 70 simulations in the Dynamical Systems eBook by Chazottes and Monticelli to those for the probability MOOC at École Polytechnique, and from the interactive visualisations for Images des maths to the Experimentarium Digitale, proceeds from the same principle: the numerical tool must not illustrate mathematics; it must make it practicable. It must lower the cognitive cost of access without betraying the rigour.

A researcher working with such tools to interrogate a Lorenz system is not watching the equation rendered as an image: they are putting the system to the test. They adjust a parameter, restart a trajectory from a nearby initial condition, observe the attractor deform, change the projection, go back, compare two regimes. Each manipulation is not merely a change of display; it is a question put to the model. Where a paper presents a stabilised result, the interactive tool makes perceptible the path by which an intuition forms: spotting a transition, suspecting a bifurcation, distinguishing what belongs to the phenomenon from what belongs to the representation. The researcher is not receiving a demonstration; they are building an active relationship with the system. This moment is not yet proof, nor a publication, nor even necessarily a result. But it belongs fully to the work of research: it is the exploratory moment when hypotheses become thinkable.

The parallel with Interface Builder is not rhetorical. In both cases, what is at stake is a reduction of cognitive latency: the delay between an intention and the observation of its effect. The shorter this delay, the more freely thought can unfold. Interactivity is not a pedagogical luxury; it is a condition for creativity.

Interactive simulation (my practice)

The researcher manipulates parameters, initial conditions, viewpoints on the system — exploring a territory from multiple directions. Mathematics ceases to be a text to decipher and becomes an object one can hold, turn, and question.

The barrier is the symbolic formulation.

The analogy with Interface Builder

The developer wires a component, observes the effect in the interface, adjusts, and repeats. Code becomes a space for manipulation rather than a text to compile.

The barrier is the compile-run-debug cycle.

When LLMs reverse the perspective

For thirty years, I built tools so that others — researchers, students, pupils — could do mathematics without mastering programming. I was the craftsman of interactivity for others. I knew how to code; my tools freed those who did not.

LLMs applied to programming have reversed this asymmetry.

When I develop today with Claude Code — describing in plain language a simulation of a mathematical model, observing the generated code, correcting it with a sentence, watching the animation evolve — I am living exactly the experience I have sought to offer my users from the start. I have become the user of my own paradigm.

I was building interfaces so that mathematicians could model and explore their equations intuitively. LLMs now allow me to program intuitively myself.

The feedback loop is identical: describe an intention → observe a result → refine through conversation → observe again. This is not traditional software development. It is exploration — the same exploration I sought to make possible for differential equations or stochastic processes, now applied to code itself.

My interactive simulations

Slider → parameter → immediate observation of system behaviour. Mathematics becomes a tactile experience.

Interface Builder (1988)

Wiring → object-method connection → immediate observation of UI behaviour. Code becomes a visual experience.

LLMs for programming (today)

Description → generated code → observation → conversational refinement. Programming becomes a dialogic experience.

What Jean-Marie Hullot had achieved for graphical interfaces — making code manipulable without erasing its structure — LLMs are achieving for programming as a whole. For me, there is in this convergence something that resembles coherence: the same principles that guided me in building my tools are guiding, on a larger scale, the evolution of the act of programming itself.

V. LLMs as the culmination of both paradigms

The use of LLMs for programming now appears as the coherent continuation of what Smalltalk and Interface Builder had initiated. In this specific domain, large language models are not a break with computing’s past — they are its natural extension, carried to its ultimate consequence.

Mutualisation at the scale of the world’s code corpus

Smalltalk mutualised the code of a handful of researchers at Xerox PARC. NeXT’s APIs extended this to a professional community. Interface Builder opened it to non-programmers, able to assemble components without reading the code. LLMs specialised in programming cross the final frontier: they mutualise the entire collective knowledge in code — decades of public repositories, technical documentation, developer discussions, algorithmic papers — and open it to anyone who can formulate an intention in natural language.

When I ask Claude to write a program simulating the evolution of a Lotka-Volterra system using the RK4 numerical method — that is, fourth-order Runge-Kutta — my approach is of the same nature as that of the NeXT developer who instantiated an NSButton, or the Interface Builder user who dragged one onto a canvas. I am invoking a mutualised collective programming effort, without mastering all its internal mechanisms. What changes is the scale: planetary rather than communal, diachronic rather than synchronic. And what also changes is the barrier to entry: a sentence in plain language rather than a wiring gesture.
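As an illustration of the kind of program such a request might produce, here is a minimal sketch of a Lotka-Volterra system integrated with the classical fourth-order Runge-Kutta scheme. It is not the output of any particular model; the parameter values, the initial populations, and the names `lotka_volterra`, `rk4_step`, `simulate`, and `conserved_quantity` are assumptions made for this example.

```python
import math

# Illustrative parameter values (assumptions, not taken from the essay).
ALPHA, BETA, DELTA, GAMMA = 1.0, 0.5, 0.2, 0.6


def lotka_volterra(state):
    """Right-hand side: prey grow and are eaten, predators feed and die."""
    prey, predators = state
    d_prey = ALPHA * prey - BETA * prey * predators
    d_predators = DELTA * prey * predators - GAMMA * predators
    return (d_prey, d_predators)


def rk4_step(f, state, dt):
    """One step of the classical fourth-order Runge-Kutta method."""
    k1 = f(state)
    k2 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k1)))
    k3 = f(tuple(s + 0.5 * dt * k for s, k in zip(state, k2)))
    k4 = f(tuple(s + dt * k for s, k in zip(state, k3)))
    return tuple(
        s + dt / 6.0 * (a + 2.0 * b + 2.0 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def simulate(initial_state, dt=0.01, steps=5000):
    """Return the list of states, including the initial one."""
    trajectory = [initial_state]
    for _ in range(steps):
        trajectory.append(rk4_step(lotka_volterra, trajectory[-1], dt))
    return trajectory


def conserved_quantity(state):
    """Constant along exact solutions; its drift measures numerical error."""
    prey, predators = state
    return (DELTA * prey - GAMMA * math.log(prey)
            + BETA * predators - ALPHA * math.log(predators))


trajectory = simulate((4.0, 1.0))
values = [conserved_quantity(s) for s in trajectory]
drift = max(values) - min(values)

print("final state:", trajectory[-1])
print("drift of the conserved quantity:", drift)
```

Monitoring the conserved quantity is one simple way to check generated numerical code rather than trust it: exact solutions of this system keep it constant, so a large drift would signal a faulty integrator or too coarse a time step.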

Interactivity carried to natural language

Interface Builder had substituted visual wiring for the compile-run-debug cycle. LLMs substitute conversation in natural language for the act of syntactic writing. The nature of latency changes: it is no longer the time of compilation or debugging — it is the time of formulation. The obstacle is no longer technical; it is semantic.

And what is remarkable is that this interactivity does not sacrifice structure. Just as Interface Builder allowed one to assemble components visually without relinquishing their internal richness, LLMs allow one to describe an intention in natural language without relinquishing the precision of the resulting code. The surface is natural; the depth remains rigorous.

Interface Builder (1988)

Mutualisation: Cocoa inheritance, shared framework.

Interactivity: visual wiring IBAction/IBOutlet.

LLMs for programming (2020–)

Mutualisation: world code corpus in the weights.

Interactivity: conversation in natural language.

VI. The continuity of an idea

Jean-Marie Hullot did not predict LLMs. But the two principles he embodied in Interface Builder — making the mutualisation of code accessible and making code immediately interactive — run through the history of computing as a continuous thread. That thread goes back further still: to Alan Kay and Smalltalk, who were the first to understand that object inheritance was a form of collective organisation of human intelligence.

What we observe over fifty years is a continuous democratisation of the act of programming: Smalltalk reserved the paradigm for researchers, NeXT opened it to expert developers, Interface Builder extended it to non-programmers, LLMs offer it to anyone who can formulate an intention in natural language. At each stage, the power is preserved — or amplified. Only the barrier to entry falls.

For those who, like me, have built their practice on the idea that understanding comes through manipulation, that exploration prepares demonstration, that latency between intention and observation is the enemy of thought — the use of LLMs for programming is a welcome surprise, but also a confirmation.

It confirms that interactivity was not a comfort feature in software development, but a mode of thinking whose reach extends well beyond software engineering. It applies to mathematics, to physics, and now to programming itself.

What I have sought to offer scientific users for thirty years, LLMs now give back to me: the possibility of thinking by doing, of understanding by exploring, of creating by conversing — applied this time to code itself.

Interface Builder had opened this path for graphical interfaces. LLMs open it for programming.

This essay was written as a personal reflection on the mediation of mathematics and scientific computing, drawing on thirty years of developing interactive numerical tools at INLN and then LJAD (CNRS, Université Côte d’Azur).

My thanks to Jean-René Chazottes for his careful reading and valuable suggestions, which contributed to improving the clarity of this text.